1. The “Frame-Level” Search Reality

By 2026, the barrier between “Text Search” and “Visual Search” has vanished.

- The Tech: Multimodal models such as Gemini 1.5 don’t need a transcript to know what’s in your video. They use Temporal Frame Analysis, sampling frames across the video’s timeline, to identify objects, brand logos, and even the emotional tone of the presenter.

- The Shift: If you publish a “How-to-Cook Kosha Mangsho” video, the AI identifies the ingredients on the counter in real time and can use that data to answer a user’s query about “Where to buy authentic spices in Kolkata.”

2. OCR Optimization: Making On-Screen Text “Machine-Readable”

In 2026, the text inside your video is as important as your meta-tags.

- The “Clear Font” Mandate: AI crawlers use OCR to read your on-screen titles. Use bold, high-contrast sans-serif fonts (like Arial or Helvetica).

- The 3-Second Rule: To ensure an AI “samples” a clear frame of your key point, keep that text on-screen for at least 2 seconds, and ideally 3.

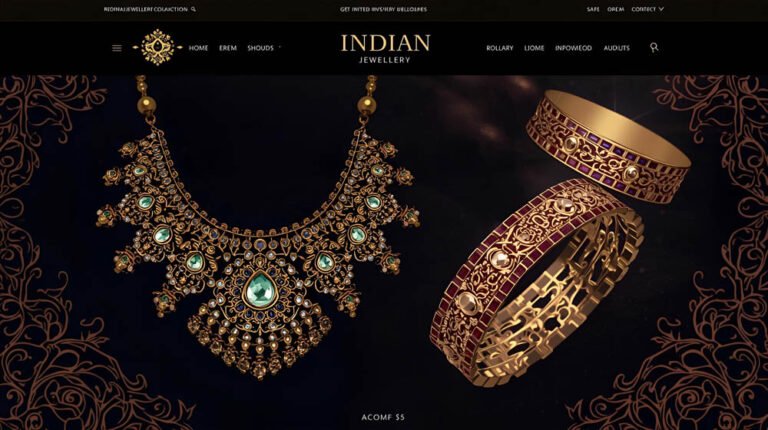

- Actionable Tip: If you’re a jewelry brand in Bowbazar, ensure your “916 Hallmark” or “Price per Gram” is clearly visible in a steady shot. An AI engine can then extract this as a “Verified Fact” to cite in a price-comparison answer.
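The two checks above, high contrast and enough screen time, can be estimated before you publish. The sketch below is a rough pre-flight check under stated assumptions, not a description of how any AI crawler actually works. It borrows the WCAG contrast-ratio formula (written for human readers) as a proxy for "high-contrast", and it assumes the parser samples one frame per second. The function names and the one-second sampling interval are illustrative assumptions.

```python
import math
from typing import Tuple

RGB = Tuple[int, int, int]


def _linear(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG definition)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    """WCAG relative luminance of an sRGB colour, from 0.0 (black) to 1.0 (white)."""
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(text: RGB, background: RGB) -> float:
    """WCAG contrast ratio between text and background, from 1.0 to 21.0."""
    l1, l2 = relative_luminance(text), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def min_sampled_frames(on_screen_seconds: float,
                       sample_interval_seconds: float = 1.0) -> int:
    """Fewest frames a fixed-interval sampler can capture of the text.

    Assumption: the sampler takes one frame every `sample_interval_seconds`
    with an unknown starting offset. The worst case is then the floor of
    duration divided by interval.
    """
    return math.floor(on_screen_seconds / sample_interval_seconds)


# White title text on a black band, held for 3 seconds.
ratio = contrast_ratio((255, 255, 255), (0, 0, 0))
frames = min_sampled_frames(3.0)
print(f"contrast {ratio:.1f}:1, at least {frames} sampled frames")
```

As a working threshold, WCAG asks for at least 4.5:1 for normal-size text. Treat that as a starting point for on-screen titles rather than a documented requirement of any search engine.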

3. “Audio Bolding” and Narrative Extraction

AI engines “listen” for cues.

- The Concept: Just as you bold text for humans, you must “bold” your audio for AI.

- The Technique: Use a short pause before and after your most important claim. Example: “Our sweets are made with… [Pause] …100% organic Nolen Gur from Joynagar. [Pause]”

- Why it works: The change in cadence sets the claim apart as a self-contained segment of the audio, which makes it easier for an AI engine to extract as a Featured Snippet.
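To check whether a pause really isolates your key claim, you can inspect word-level timestamps from any speech-to-text tool. The sketch below is illustrative only: the timestamps are made-up sample data, the trailing words "Visit us" are a hypothetical addition so that the claim has speech on both sides, and the 0.5-second pause threshold is an assumption rather than a known setting of any AI engine.

```python
from typing import List, Tuple

# (word, start_seconds, end_seconds); hypothetical sample data
Word = Tuple[str, float, float]


def pause_bracketed_phrases(words: List[Word], min_pause: float = 0.5) -> List[str]:
    """Return phrases with a silence of at least `min_pause` before and after."""
    phrases: List[List[Word]] = []
    current: List[Word] = []
    for i, word in enumerate(words):
        # Start a new phrase whenever the gap since the previous word is long enough.
        if current and word[1] - words[i - 1][2] >= min_pause:
            phrases.append(current)
            current = []
        current.append(word)
    if current:
        phrases.append(current)
    # Only interior phrases have a pause on both sides.
    return [" ".join(text for text, _, _ in phrase) for phrase in phrases[1:-1]]


words = [
    ("Our", 0.0, 0.2), ("sweets", 0.2, 0.6), ("are", 0.6, 0.7),
    ("made", 0.7, 0.9), ("with", 0.9, 1.1),
    # 0.7 s pause
    ("100%", 1.8, 2.2), ("organic", 2.2, 2.6), ("Nolen", 2.6, 2.9),
    ("Gur", 2.9, 3.1), ("from", 3.1, 3.3), ("Joynagar", 3.3, 3.9),
    # 0.7 s pause
    ("Visit", 4.6, 4.9), ("us", 4.9, 5.1),
]

print(pause_bracketed_phrases(words))
```

If your key claim does not come back as its own phrase, the pauses around it are probably too short to stand out.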

4. Comparison: Traditional Video SEO vs. 2026 Visual GEO

| Feature | Old School (2024) | 2026 Visual GEO |
| --- | --- | --- |
| Discovery | Keywords in Title/Tags. | Object & Action Recognition in Frames. |
| Indexing | Relies on the SRT/Transcript. | Parses Audio/Video directly & simultaneously. |
| Visuals | Decorative / Aesthetic. | Data-Bearing (Infographics, Labels). |
| Ranking Goal | “Watch Time” & “CTR.” | “Citable Fact Density” & “Entity Match.” |
| Accessibility | Alt-text for the blind. | Metadata for the Machine. |

5. The “Visual Anchor” Strategy for Kolkata Retail

To get cited by Perplexity or Gemini’s “Shopping Mode,” your products need Visual Anchors.

- 360-Degree Spatial Mapping: When filming a product in your Park Street showroom, rotate it slowly. This allows AI models to build a “3D spatial understanding” of the item from 2D frames.

- Contextual Staging: Don’t just show a sofa. Show a sofa in a living room with a view of the Howrah Bridge in the background (or a digital equivalent). The AI “anchors” the product to a specific Entity (Kolkata), making you more relevant for local queries.

6. Technical Visual Schema: The “VideoObject” 2.0

In 2026, basic schema isn’t enough. You need Nested Technical Data.

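- Clip Markup: Manually define the “Key Moments” in your videos by giving each one a name, a start offset, and a URL that jumps to that timestamp. For videos hosted on YouTube, timestamps in the video description do this job; Clip markup goes on your own pages that embed the video. (SeekToAction is the alternative markup for letting the search engine find key moments automatically.)

- hasPart Property: Nest each Clip inside the VideoObject’s hasPart property to define specific “Chapters” within your video as independent “Fact Blocks.”

- Speakable Schema: Mark the sections of the page that carry your video script as “summarization-friendly” for voice assistants like Gemini Live. In schema.org, speakable is defined for WebPage and Article, so it belongs in the page’s markup rather than on the VideoObject itself.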
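The Clip and hasPart points above can be sketched as a small script that generates the JSON-LD for a page embedding your video. This is a minimal illustration, not a complete or validated markup: every URL, title, date, and timestamp is a placeholder, and the helper name build_video_jsonld is hypothetical. The property names (VideoObject, hasPart, Clip, startOffset, endOffset) are taken from schema.org.

```python
import json
from typing import Dict, List, Tuple

# (chapter title, start in seconds, end in seconds)
Chapter = Tuple[str, int, int]


def build_video_jsonld(name: str, description: str, page_url: str,
                       content_url: str, thumbnail_url: str,
                       upload_date: str, chapters: List[Chapter]) -> Dict:
    """Build a schema.org VideoObject with one Clip per chapter under hasPart."""
    clips = [
        {
            "@type": "Clip",
            "name": title,
            "startOffset": start,
            "endOffset": end,
            # Each clip URL should land the viewer at that timestamp.
            "url": f"{page_url}?t={start}",
        }
        for title, start, end in chapters
    ]
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "contentUrl": content_url,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,
        "hasPart": clips,
    }


# Placeholder values for illustration only.
markup = build_video_jsonld(
    name="How to Cook Kosha Mangsho",
    description="Step-by-step Kosha Mangsho with ingredients shown on screen.",
    page_url="https://www.example.com/kosha-mangsho",
    content_url="https://www.example.com/media/kosha-mangsho.mp4",
    thumbnail_url="https://www.example.com/media/kosha-mangsho.jpg",
    upload_date="2026-01-15",
    chapters=[
        ("Ingredients on the counter", 0, 45),
        ("Slow-cooking the mutton", 45, 180),
    ],
)

print(json.dumps(markup, indent=2, ensure_ascii=False))
```

Paste the printed JSON into a script tag of type application/ld+json on the page that embeds the video, then run it through a structured-data testing tool before relying on it.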

7. FAQ: Optimizing for the AI “Eye”

- Q: Does resolution matter for SEO?

- A: Yes. While 4K isn’t mandatory, OCR accuracy on small on-screen text tends to drop below 1080p. If the AI can’t read your labels, it won’t cite your data.

- Q: Will AI ignore my video if I use a “Smash Cut” editing style?

- A: It might. Rapid-fire editing (TikTok style) is great for humans but hard for AI to “Ground.” For technical or educational content, use a “Slow TV” approach with deliberate pans.

- Q: How do I optimize my Instagram Reels for AI Search?

- A: Use Native Captions (the ones built into the app). Google can now index public content from professional Instagram accounts, and videos whose metadata matches the on-screen text that OCR reads are likely to be favored.

Conclusion: The Camera is the New Keyboard

In 2026, you aren’t just “filming a video”; you are building a dataset. Every frame you publish is an opportunity to provide a “Visual Fact” that an AI engine can use to solve a user’s problem. For Kolkata brands, this is the ultimate chance to prove authenticity in a world of AI-generated fakes.

At our Alipore studio, we specialize in “Multimodal Discovery.” We don’t just produce beautiful videos; we produce “Search-First” visual assets that Gemini and Perplexity are “trained” to love.

Is your video content “Blind” to AI?

Let’s do a “Multimodal Audit.” We’ll run your existing YouTube and Instagram content through a 2026 AI-Parser to see what “Facts” the machines are actually seeing—and where you’re leaving visibility on the table.